Regression analysis is a statistical technique used to analyze the relationship between one variable (the dependent variable) and one or more other variables (independent variables) using a set of data points. A regression model is a formal means of expressing a tendency of the dependent variable to vary with the independent variable in a systematic fashion.

In the context of a hedge effectiveness assessment, the primary objective of regression analysis is to determine if changes to the hedged item and derivative are highly correlated and, thus, supportive of the assertion that there will be a high degree of offset in fair values or cash flows achieved by the hedge. For example, if a $10 change in the dependent variable (i.e., the derivative) was accompanied by an offsetting $10.01 change in the independent variable (i.e., the hedged item) and if further changes in the dependent variable were accompanied by similar magnitude changes in the independent variable, there would be a strong correlation because approximately 100% of the change in the dependent variable can be “explained” by the change in the independent variable.

The use of regression analysis is more likely than the dollar-offset method to enable a reporting entity to continue with hedge accounting despite unusual aberrations that may occur in a particular period. Because the regression covers many periods, an isolated aberration is outweighed by the more typical changes in fair value that occur over the remainder of the periods included in the regression. However, the use of regression analysis is complex; it requires considerable effort to develop the models, and interpreting the results requires judgment.

Model inputs

The following are key considerations regarding inputs in the regression analysis.

Dependent and independent variables

In the regression model, the change in fair value of the derivative will likely be the dependent variable (Y) and the change in fair value of the hedged item will likely be the independent variable (X).

Data points

The objective of the regression analysis is to estimate a linear equation that best captures the relationship between the hedged item and the derivative. The inputs are a series of matched-pair observations for the hedged item and derivative. For example, the inputs could be the change in fair value of the hedged item and derivative observed weekly between January 1, 20X1 and October 31, 20X1. Thus, the first observation would be as of January 8, 20X1 and would include only the changes in the fair value of the derivative and the hedged item from January 1, 20X1 to January 8, 20X1. Subsequent observations would include only the changes in the fair value of the derivative and hedged item that occur during the weekly periods under observation (i.e., not on a cumulative basis). Use of cumulative changes has a propensity to create autocorrelation in the regression analysis, which may invalidate it. See the Other considerations section later in this section.
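As an illustration of the non-cumulative observations described above, the following minimal sketch converts a series of fair value levels into period-over-period changes and pairs them up. The function name `period_changes` and all of the fair values are hypothetical and are used only for illustration.

```python
def period_changes(fair_values):
    """Return the change in fair value for each period (not cumulative)."""
    return [curr - prev for prev, curr in zip(fair_values, fair_values[1:])]


# Hypothetical weekly fair values, oldest first (illustrative numbers only).
derivative_fv = [0.0, 4.0, 1.5, 6.0, 3.0]
hedged_item_fv = [100.0, 96.1, 98.4, 94.0, 97.1]

derivative_changes = period_changes(derivative_fv)
hedged_item_changes = period_changes(hedged_item_fv)

# Matched pairs: (change in hedged item, change in derivative) for the same week.
matched_pairs = list(zip(hedged_item_changes, derivative_changes))
```

Each pair reflects only the change during its own week, so no observation carries forward the changes of an earlier week.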

In calculating the data points to be used in the regression model, reporting entities should also decide whether to use a declining maturity approach (i.e., the remaining term of the hedged item and hedging instrument will vary at each data point because the maturity date is held constant) or a constant maturity approach (i.e., the remaining term of the hedged item and hedging instrument will stay constant at each data point).

- In a declining maturity approach, the reporting entity uses some previously-calculated data points by removing the oldest and adding more recent data points (keeping the number of data points the same each period).

- In a constant maturity approach, all of the data points are recalculated in each successive analysis as the remaining tenor or life of the derivative changes over time.
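The roll-forward of data points described for the declining maturity approach can be sketched with a fixed-length window. This is only an illustration: the window size of 4 and the data pairs are hypothetical, and a real analysis would typically use far more observations.

```python
from collections import deque

WINDOW_SIZE = 4  # hypothetical; chosen small to keep the example short

# Each entry is a (change in hedged item, change in derivative) pair, oldest first.
window = deque(
    [(-3.9, 4.0), (2.3, -2.5), (-4.4, 4.5), (3.1, -3.0)],
    maxlen=WINDOW_SIZE,
)

# Adding the newest observation automatically drops the oldest one,
# so the number of data points stays the same each period.
window.append((1.2, -1.0))
```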

Time horizon

For prospective considerations throughout the life of a hedging relationship, the analysis should use observations selected on a consistent basis over a consistent period of time. The time horizon (period over which data points are gathered) should be relevant for the hedging period and statistically significant.

Number of data points

It is important to use a sufficient number of data points to ensure a statistically valid regression analysis. Generally speaking, as sample size increases, interpretation of the model and conclusions that can be drawn improve. We expect most regression analyses conducted to assess hedge effectiveness will be based on 30 or more observations, but fewer may be acceptable in certain circumstances.

ASC 815-20-35-3 permits a reporting entity to use the same regression analysis for both prospective and retrospective tests. The regression calculations should use the same number of data points, and the reporting entity must periodically update the data points used in its regression analysis.

Results

The following are the key metrics in a statistically-significant regression analysis.

- The R² statistic should be 80% (0.8) or greater.

- The slope coefficient should be between -0.8 and -1.25.

- The F-statistic or t-statistic associated with the slope coefficient should be significant at a 95% or greater confidence level.

In addition:

- Unexpectedly large residuals, especially recent ones, may indicate an unusual period in the relationship.

- The possibility of autocorrelation should be considered.
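The three quantitative metrics listed above can be screened with a short calculation. The sketch below is an illustration under stated assumptions, not a prescribed method: the function names `ols_summary` and `passes_screens` are hypothetical, the six data points are made up (far fewer than would be used in practice), and the critical t-value must be supplied by the caller for the relevant degrees of freedom.

```python
import math


def ols_summary(x, y):
    """Fit y = intercept + slope * x by ordinary least squares."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Sum of squared residuals around the fitted line.
    sse = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    r_squared = 1.0 - sse / syy
    # Standard error of the slope, using n - 2 degrees of freedom.
    slope_std_err = math.sqrt(sse / (n - 2) / sxx)
    return {
        "slope": slope,
        "intercept": intercept,
        "r_squared": r_squared,
        "t_stat": slope / slope_std_err,
    }


def passes_screens(summary, t_critical):
    """Apply the R-squared, slope, and t-statistic screens from the list above."""
    return (
        summary["r_squared"] >= 0.80
        and -1.25 <= summary["slope"] <= -0.80
        and abs(summary["t_stat"]) >= t_critical
    )


# Hypothetical periodic changes: X = hedged item, Y = derivative.
x = [-3.9, 2.3, -4.4, 3.1, 1.2, -2.0]
y = [4.0, -2.5, 4.5, -3.0, -1.0, 2.2]

summary = ols_summary(x, y)
# 2.776 is the two-sided 95% critical t-value for 4 degrees of freedom (n - 2).
result = passes_screens(summary, t_critical=2.776)
```

A passing result on these screens does not by itself address the residual and autocorrelation considerations noted above, which still require judgment.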

R²

The degree of explanatory power or correlation between the dependent and independent variables is measured by the coefficient of determination, or R². R² is one of the key statistical considerations when a regression analysis is used to support hedge accounting. The R² indicates the portion of variability in the dependent variable that can be explained by variation in the independent variable. Therefore, the higher the R² for a hedging strategy, the more effective the relationship is likely to be.

Although ASC 815 does not provide a specific threshold for R², practice generally requires an R² of 0.80 or higher for a hedging relationship to be considered highly effective.

While the R² is a key metric, it is not the only consideration when using regression analysis to evaluate the effectiveness of a hedging relationship. Reporting entities should also evaluate the slope coefficient and the F-statistic or t-statistic, which measure the statistical significance of the relationship between the variables.

Slope coefficient

The slope is an important component of a highly effective hedging relationship. The slope coefficient is the slope of the straight line that the regression analysis determines "best fits" the data.

Many regression analyses use the "least squares" method to fit a line through the set of observations (ordinary least squares regression). This method determines the intercept and slope that minimize the size of the squared differences between the actual Y observations and the predicted Y values (i.e., the vertical differences between plotted observations and the regression line).
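For reference, the least squares fit described above can be written out as follows. The notation is an assumption introduced here for illustration: a denotes the intercept, b the slope, and X̄ and Ȳ the sample means of the n observations.

```latex
% Objective: choose the intercept a and slope b that minimize the squared vertical differences
\min_{a,\,b} \; \sum_{i=1}^{n} \left( Y_i - a - b X_i \right)^2

% Closed-form solution for a single independent variable
\hat{b} = \frac{\sum_{i=1}^{n} (X_i - \bar{X})(Y_i - \bar{Y})}{\sum_{i=1}^{n} (X_i - \bar{X})^2},
\qquad
\hat{a} = \bar{Y} - \hat{b}\,\bar{X}
```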

The slope coefficient should be interpreted as the change in the derivative associated with a change in the hedged item. If the model is developed using the change in fair value of the derivative as the dependent variable (Y) and the change in fair value of the hedged item as the independent variable (X), the slope equals the change in Y divided by the change in X, or "rise" over "run." In effective one-for-one hedging relationships, the slope coefficient will approximate -1. In practice, many reporting entities apply a range of -0.80 to -1.25, as described in DH 9.2.1.

The slope coefficient should be negative (except when the hedged item is represented by a hypothetical derivative in a cash flow hedge) because the derivative is expected to offset changes in the hedged item. In other words, to be an effective hedging relationship, the derivative and the hedged item must move in an inverse manner. If the analysis yields a positive slope coefficient, it means that when the hedged item goes up in value, the derivative goes up in value, which is not a hedge. If the hypothetical derivative method is used in a regression as a proxy for the hedged item, the slope of a regression line would be positive, since the actual derivative is compared to a hypothetical derivative, rather than to the hedged item itself.

F-statistic or t-statistic

An F-statistic or t-statistic associated with the slope coefficient is useful in determining whether there is a statistically significant relationship between the dependent and independent variables. In ordinary least squares regression analyses, the F-statistic is equal to the squared t-statistic for the slope coefficient. Generally, the result should be significant at a 95% confidence level.
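The equality between the F-statistic and the squared t-statistic can be seen from the standard definitions for a regression with a single independent variable. The symbols below are assumptions introduced for illustration: b̂ is the estimated slope, s² is the residual variance with n − 2 degrees of freedom, and S_xx is the sum of squared deviations of X from its mean.

```latex
% t-statistic for the slope
t = \frac{\hat{b}}{s / \sqrt{S_{xx}}},
\qquad
S_{xx} = \sum_{i=1}^{n} (X_i - \bar{X})^2

% F-statistic: explained variation (1 degree of freedom) over residual variance
F = \frac{\hat{b}^{2} S_{xx}}{s^{2}} = t^{2}

% Equivalent expression in terms of R^2
F = \frac{R^{2}\,(n - 2)}{1 - R^{2}}
```

Because the two statistics carry the same information in this setting, testing either one at a given confidence level leads to the same conclusion.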

Other considerations

Unexpectedly large residuals (relative to the predicted value or to other residuals) may indicate an unusual period in the relationship between the dependent and independent variables. In many cases when the regression analysis yields acceptable results, the residuals will not be important. However, residuals may signal declining effectiveness if the largest residuals come primarily from the most recent observations. Judgment should be used when interpreting declining effectiveness over time. The decline could be temporary, or it could call into question the effectiveness of the hedging relationship in future periods if the trend persists.

One of the assumptions underlying ordinary least squares regression is that the errors are uncorrelated. Correlated errors are referred to as “autocorrelation.” Autocorrelation may indicate that the regression model is not statistically valid because it can cause the R², F-statistic (or t-statistic), and slope coefficient to be misstated. In time series data, autocorrelation can be caused by the prolonged influence of shocks in the economy (e.g., the effects of war or strikes can affect several periods). Autocorrelation can also be artificially induced through the use of overlapping observations. For example, overlapping inputs would result if the first observation in a regression analysis is the change in value from January 1, 20X1 to March 31, 20X1 and the second observation is the change in value from February 1, 20X1 to April 30, 20X1. Overlapping inputs should be avoided because they create a dependency in the input variables: some months are common to both observations.

Reporting entities should consider use of statistical procedures that are available to detect, and attempt to correct for, autocorrelation, such as the Durbin-Watson Test.
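As a minimal sketch of the Durbin-Watson statistic, the function below computes it from a list of regression residuals ordered in time. The function name and the residual values are hypothetical. The statistic ranges from 0 to 4; values near 2 suggest little first-order autocorrelation, values toward 0 suggest positive autocorrelation, and values toward 4 suggest negative autocorrelation. Formal conclusions depend on critical values for the sample size, which this sketch does not cover.

```python
def durbin_watson(residuals):
    """Sum of squared successive differences divided by sum of squared residuals."""
    numerator = sum(
        (curr - prev) ** 2 for prev, curr in zip(residuals, residuals[1:])
    )
    denominator = sum(e ** 2 for e in residuals)
    return numerator / denominator


# Hypothetical residuals, in time order.
alternating = [1.0, -1.0, 1.0, -1.0]  # sign flips every period
persistent = [1.0, 1.0, -1.0, -1.0]   # errors tend to repeat

dw_alternating = durbin_watson(alternating)
dw_persistent = durbin_watson(persistent)
```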

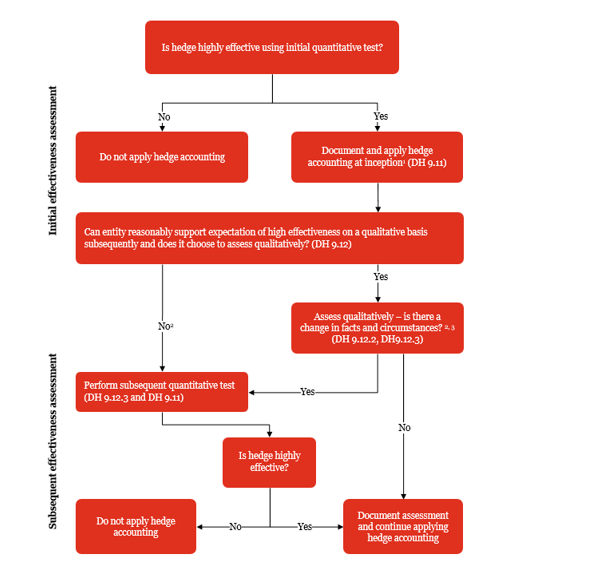
